I first came face-to-face with what it means to have your digital privacy violated when I was 13 years old. It was Grade 8 and the Covid-19 restrictions had just been lifted. Students were finally allowed back to school, but not exactly as before. Now it was a “hybrid” model that involved plenty of standing around spaced six feet apart. One day I was socially distancing myself from my classmates in a schoolyard line-up when the student behind me leaned forward and tapped me on the shoulder to ask if I’d seen “the videos”. Equal parts curious and confused, I said I hadn’t and took a look at her phone. On screen was another girl from my grade – a girl who was standing just a space ahead of us in line with a hoodie pulled tight over her head. In the video, however, she was dancing topless in her bedroom.

The girl, I later discovered, had been part of a small group on Snapchat which had degenerated into an online game of truth-or-dare as the boys goaded the girls into sharing nudes of each other. The lighthearted joking quickly turned into something much worse as the videos took flight. Soon the images had been distributed far beyond the initial group, first to our whole grade – and then to other middle and high schools in our district. Judging by her furtive behaviour on the playground, the girl was intensely embarrassed.

By the next week, everyone I knew had seen the videos. Some even saved them for “leverage” later on. Yet no teacher at our school ever addressed the issue publicly, nor was there a schoolwide conversation about consent or online hygiene. The moment eventually disappeared in a way that digital images never do.

The most unsettling thing for me wasn’t the images themselves. It was how the entire situation seemed so ordinary. To everyone else, it was just another thing that happens on Snapchat. Something to share, like the rest of our lives. When everyone is watching, there’s no such thing as privacy.

Looking back, it’s easy to point fingers. The girl who took the video of herself should have known better. The same goes for her “friends” in the group who encouraged her behaviour. And especially those outside the group who broadcast the evidence far and wide. They all should have known better. Been more careful. Been more aware. These arguments certainly hold weight. The digital world is a dangerous and unforgiving environment, one that requires caution and circumspection. And Gen Z should know this better than anyone else. We are, after all, the first generation raised entirely in the digital age.

But is that all there is to it? How can 13-year-olds be expected to grasp the permanence, amorality and coerciveness built into systems designed for impulsive sharing? Don’t adults – parents and governments especially – have a role to play?

That incident five years ago had a big impact on my personal relationship with digital media. Now in university, I have become much more aware of my own privacy – and the regulations, politics, business interests and personal choices that are involved. As Canadians, we are often told that privacy is a fundamental right, like the right to free speech or religion. But in reality, this right is often treated as if it is up for grabs. Our privacy can be traded or taken away in exchange for all sorts of other things: fun, convenience, friendship, business intelligence, political advantage or sheer criminality. And if that’s the case, how can privacy be a right in any real sense of the word?

The Right to be Left Alone

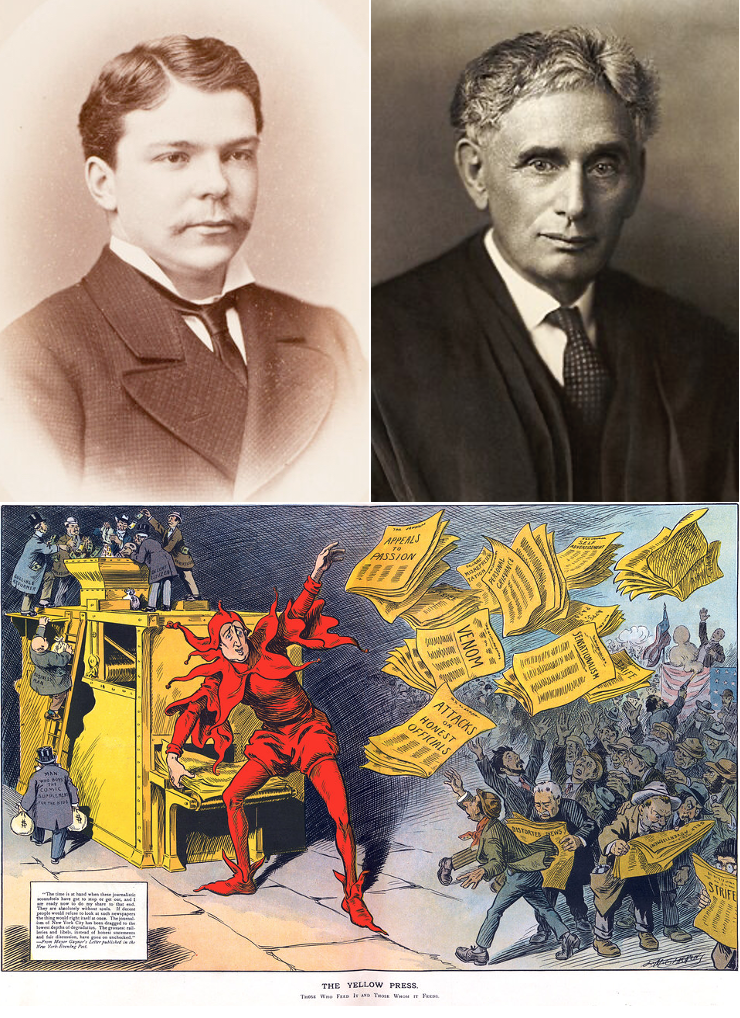

Among the most consequential law articles ever written is “The Right to Privacy” by Boston lawyer Samuel Warren and future U.S. Supreme Court Justice Louis Brandeis, published in an 1890 edition of the Harvard Law Review. It is generally regarded as the first concrete, legalistic effort to grapple with what we mean by personal privacy in the modern sense.

Warren and Brandeis were writing in the era of “Yellow Journalism”. Technological advances in photography, printing and communications along with larger-than-life press barons such as William Randolph Hearst and Joseph Pulitzer spurred a raucous press that trumpeted sensational and salacious stories on its front pages and had no qualms about invading the privacy of American citizens to do so. “Gossip is no longer the resource of the idle and of the vicious, but has become a trade, which is pursued with industry as well as effrontery,” Warren and Brandeis wrote. “To satisfy a prurient taste, the details of sexual relations are spread broadcast in the columns of the daily papers…which can only be procured by intrusion upon the domestic circle.”

Despite their antique language, Warren and Brandeis make a powerful and relevant argument about “the right to be left alone” today. Our current problem, however, is not photographers hiding in the bushes outside our bedroom windows. Instead, our privacy is being invaded by friends and intimate acquaintances, as well as countless service providers and organizations with whom we voluntarily interact. We welcome them all into our own living rooms and bedrooms, using the platforms and apps that we willingly download onto our phones and other devices and then embed into our daily lives.

In the 21st century we are invading our own privacy. Surveillance has been repackaged and sold as convenience in the form of smartphones, smart home devices ranging from TVs to security cameras, and personalized feeds. The algorithm is now an entity that “personalizes” our experience and makes it almost impossible to stop.

As Jeffrey Orlowski points out in his documentary film The Social Dilemma, these platforms are “powered by a surveillance-based business model designed to mine, manipulate, and extract our human experiences at any cost.” And nearly everyone understands, at least in principle, that this is how the world now works. A 2022 survey by the Office of the Privacy Commissioner of Canada found that “91% of Canadians believe that at least some of what they do online or on their smartphones is being tracked by companies or organizations.” In our desire for connection, efficiency and belonging we are sharing our most important information voluntarily, if not willingly.

Beyond the role of social media in degrading personal privacy, there’s also the massive intrusion of state surveillance. From the proliferation of CCTV cameras to licence-plate identification systems and other digital surveillance technology, governments are rapidly expanding their data-gathering to keep an eye on us all. Officials often insist such tools are necessary to prevent crime or increase the public sector’s efficiency.

“If you have nothing to hide, you have nothing to fear,” claimed Conservative MP Richard Graham in defending the government of British Prime Minister David Cameron in 2015 when it introduced its Investigatory Powers Act. Known to critics as the “Snooper’s Charter”, this legislation has since given the British state a nearly unfettered ability to intercept and collect daily online communications among British citizens. This is how the world works now. While Canada may be a few steps behind the UK and the European Union, the Liberal government has been busily scheming to catch up, particularly given the vast surveillance powers included in last year’s Strong Borders Act, which remains a live piece of legislation.

Two of the most widely referenced dystopian novels of the post-Second World War era are George Orwell’s 1984 and Aldous Huxley’s Brave New World. Orwell warned of a world in which citizens would be watched and controlled by an unseen but malicious Big Brother; Huxley’s vision was of a future in which the search for idle pleasure, via a fictional drug he called “soma”, would lead people to submit to state control of their own volition. Based on the evidence so far, it’s not clear which author got it right. The state observes us non-stop, as Orwell predicted, but we’ve also willingly ceded our privacy to the social media platforms that largely rule our lives and dole out tidbits of fleeting happiness, as Huxley might have worried.

What are the origins of the concept of personal privacy, and how has it changed since the 19th century?

The modern legal understanding of personal privacy was established in an 1890 Harvard Law Review article by Samuel Warren and Louis Brandeis, who argued for the creation of a “right to be left alone”. The primary threat to personal privacy in their time was “Yellow Journalism” – mass-market newspapers owned by unscrupulous press barons that published salacious details about noteworthy individuals. The nature of privacy intrusions has changed dramatically since then. Today individuals voluntarily embed surveillance-based technologies – smartphones, home devices and apps – into their daily lives. In essence, we are colluding in constant invasions of our own privacy, as the personalized convenience of new technology makes it nearly impossible for users to opt out of a surveillance state run by Big Tech and facilitated by Big Government.

Privacy Law in Theory and Practice

Privacy law is supposed to protect people from the predations of government and business, as well as their own worst instincts. That’s the theory. But the results often disappoint. When it first came into force in 1995, the EU’s Data Protection Directive, later updated as the General Data Protection Regulation (GDPR), was widely regarded as the global gold standard for the protection of digital privacy. Today the GDPR offers consumers broad transparency in how their data has been collected and used and, via large fines, enforces an expectation of accountability on companies. This centralized and punitive model contrasts sharply with the U.S. approach, where personal data is generally protected at the state level and on a sector-by-sector basis. Canada’s Personal Information Protection and Electronic Documents Act (PIPEDA) of 2001 was largely based on the 1995 European model.

But simply having a law on the books doesn’t mean your privacy is secure. “Privacy law looks to people’s expectations to set the limits of surveillance; yet over time, people become increasingly acclimated to being watched,” write Woodrow Hartzog, Evan Selinger and Johanna Gunawan in their 2024 article Privacy Nicks: How the Law Normalizes Surveillance. The authors are, respectively, a law professor at Boston University, philosophy professor at the Rochester Institute of Technology and computer science professor at Maastricht University in the Netherlands.

The “privacy nicks” of their title are repeated minor intrusions by government and business in what they call “blind spots” of existing privacy laws – often created by the arrival of new technologies – which slowly erode the very concept of privacy. Doorbell cameras, smart glasses and facial recognition devices at airports are all examples of privacy nicks: intrusions unanticipated by existing legislation that gradually wear away privacy standards.

Further, most privacy laws rely heavily on the “informed consent” model. This is the assumption that users understand how their data is collected and used and then make intelligent decisions about it. In reality, few individuals read, let alone comprehend, the lengthy “Terms & Conditions” they are constantly presented with. Among my Gen Z cohort, I think it’s safe to say everybody just scrolls to the bottom and clicks “I Agree”. Researchers from Carnegie Mellon University calculated it would take the average person 38 eight-hour days simply to read every privacy policy statement he or she encounters online in a year; across the entire U.S. that’s 54 billion hours of reading. This finding suggests the informed consent model is largely performative and does not provide meaningful choice or autonomy for the user.

Active participation in modern life now requires nearly constant digital engagement. Flying out of airports, applying for jobs, accessing government services, communicating socially, making many kinds of purchases, handling financial matters – all depend on digital platforms or devices that collect and process personal information. But when opting out becomes synonymous with social or economic exclusion, consent is no longer freely given. Surveillance is ubiquitous not because individuals no longer value their privacy, but because there is little room to refuse. Most people have come to accept these nicks and the accompanying risks as normal parts of life. Privacy is no longer a shield but a trade-off in negotiations most of us can barely remember entering into.

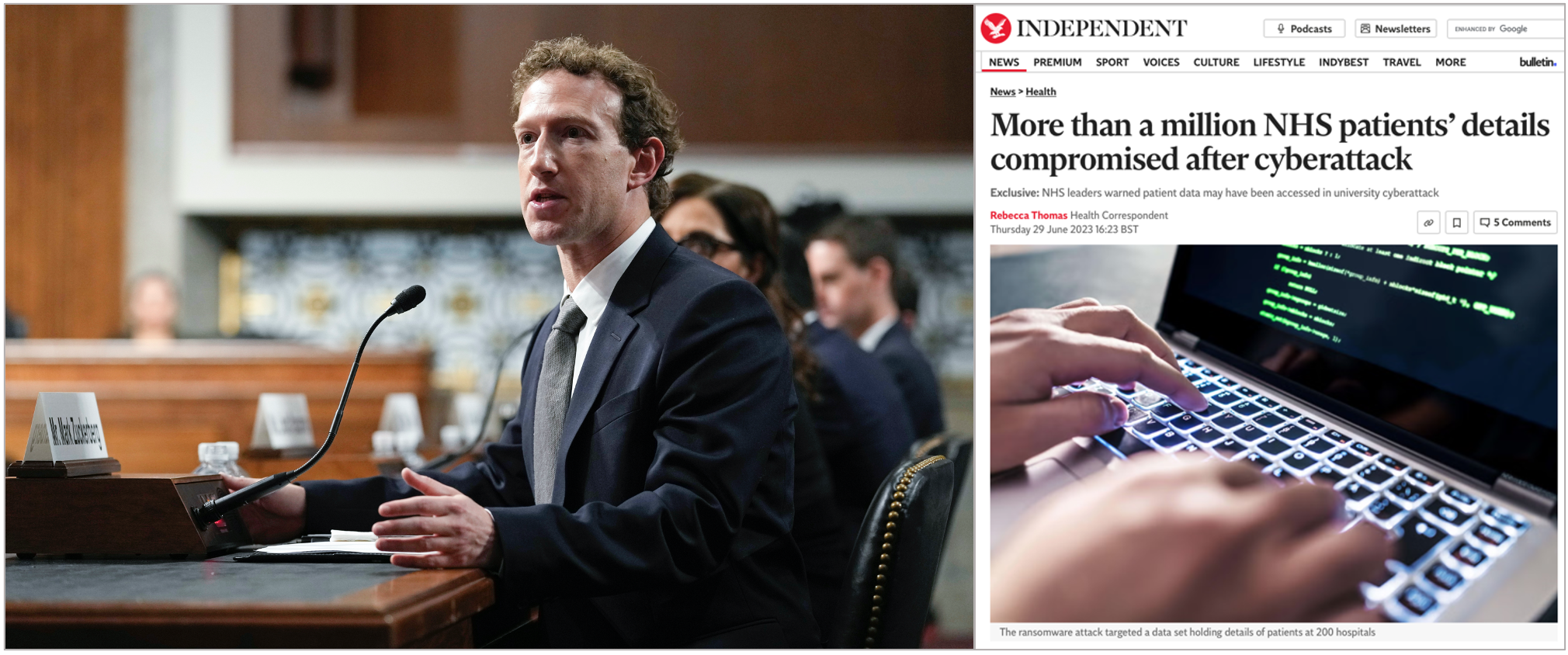

And while transgressors are supposed to be punished, the effectiveness of even large fines seems highly debatable. Since the EU’s GDPR came into effect in 2018, approximately €6 billion (Cdn$9.4 billion) in fines have been issued to companies for data-collection violations. But despite the prospect of massive penalties, there’s no evidence that individual privacy has improved substantially or that the problems are about to stop. An avalanche of data breaches, intrusions and privacy violations still occurs on a disturbingly regular basis.

In 2025, for example, Google was caught misusing electronic cookies and inserting advertisements disguised as emails into users’ Gmail accounts without their consent. That brought a €325 million (Cdn$510 million) fine from France’s Commission Nationale de l’Informatique et des Libertés, which oversees online privacy. Google had already been fined €100 million in 2020 and €150 million in 2021 for related violations. And in 2023, Facebook owner Meta was fined €1.2 billion (Cdn$1.9 billion) for transferring private information from Europe to the U.S. in contravention of the GDPR.

But even these seemingly large sums are mere back-eddies amidst the torrent of funds generated in the digital world. Meta, for example, in 2023 generated gross earnings of US$58 billion. So the overall message seems clear: when the potential profits are sufficiently vast, penalties become just another cost of doing business.

And the threats to privacy go well beyond the tech sector. In the United Kingdom, major cyberattacks on the National Health Service have released sensitive patient data as well as other classified information. Universities and other institutions across North America have also suffered large-scale data breaches. In 2025, hackers accessed Princeton University’s database containing personal information on staff, alumni and donors. And this year in Mexico, a hacker used AI to steal 150 gigabytes of government data, including taxpayer and voter records, government employee credentials and civil registry files. It’s not just greedy corporations that pose a problem to our personal privacy; incompetent public institutions are another, enormous weak zone.

Why is the “informed consent” model for digital privacy a failure in practice?

The informed consent model relies on the assumption that users will read, comprehend – and potentially act upon – all the “Terms & Conditions” they encounter on all the digital platforms they use. Yet research from Carnegie Mellon University indicates it would take an average person 38 eight-hour days to read every privacy policy they come across online each year. In reality, most users simply scroll to the bottom of these often-lengthy documents and click “I Agree” without any meaningful understanding of how their data is being harvested or used. Further, digital engagement has become a requirement for many essential activities, including accessing government services, applying for jobs, navigating airports and many more. Because opting out to protect one’s privacy can lead to social or economic exclusion, consent is rarely freely given. That makes digital literacy essential for individuals to understand how their personal data is collected, sorted and sold.

Ongoing Indecision in Canada’s Digital Landscape

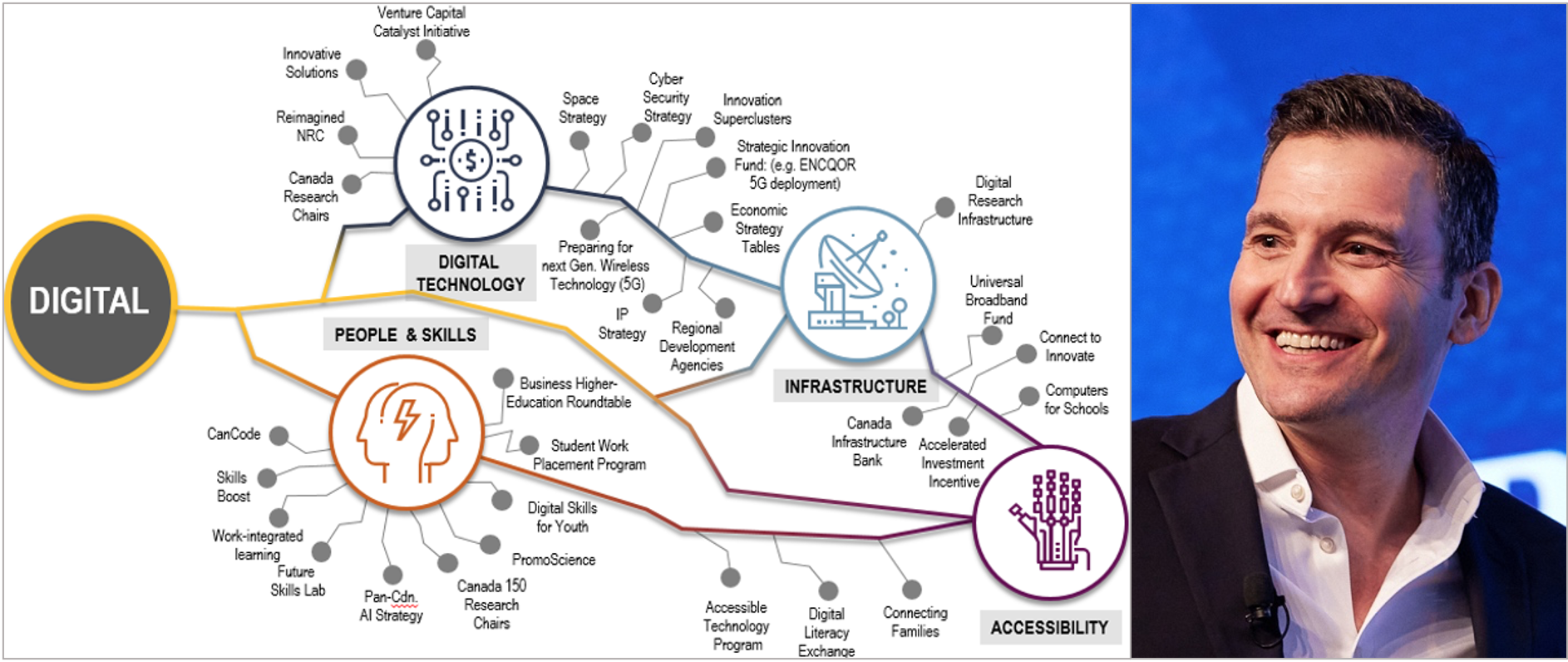

At home, Canada has utterly failed to keep pace with the rapidly changing technology landscape. When PIPEDA was introduced in 2001, it was considered state-of-the-art for its mimicry of the EU model. But that was six years before Apple introduced the original iPhone. Today PIPEDA is hopelessly outdated in a world of Instagram (2010), Snapchat (2011) and TikTok (2019), to name just three prominent social media platforms. The recent rise of AI creates many additional challenges, such as its ability to re-identify formerly public data that had supposedly been anonymized.

The federal government has made several half-hearted attempts at bringing Canada’s privacy legislation into the modern app era. Bill C-11, aka the Consumer Privacy Protection Act, was introduced in 2020 by the Justin Trudeau government to replace and update PIPEDA. It was heavily criticized by privacy experts as being toothless – especially for failing to properly grapple with the issue of meaningful consent. It died on the Order Paper.

In 2022 the Trudeau Liberals introduced Bill C-27, another proposed overhaul of Canada’s privacy law. This was basically an updated version of the earlier Bill C-11 with a few bonus features, such as explicit protection for minors and a “Digital Charter” that laid out expectations for the privacy rights of citizens. While these were welcome improvements, the bill didn’t go far enough. And bolted onto it was an entirely new piece of legislation called the Artificial Intelligence and Data Act that dealt with a very different issue.

The two-headed Bill C-27 was studied exhaustively at the committee stage in the House of Commons, with more than 30 hearings over two years, mostly to negative reviews. In its written submission, the Canadian Civil Liberties Association complained the bill “inappropriately frames people’s privacy rights as something to be balanced against and placed below commercial interests.” Others criticized the dual nature of the bill. Bill C-27 was also allowed to die a quiet death when Prime Minister Mark Carney called last year’s election.

Evan Solomon, the federal Minister of Artificial Intelligence and Digital Innovation, says he intends to bring back the concept of a Digital Charter at some point. His job title and recent comments, however, suggest he’s much more interested in unleashing the economic potential of AI than in closing loopholes in Canada’s ancient digital privacy regime. In an interview with tech news site BetaKit, Solomon said he was wary of “over-indexing” on privacy regulations that might get in the way of reaping AI’s vast benefits. It can be argued, though, that these two interests are not merely competing for Solomon’s attention but are diametrically opposed, given the latest AI models’ voracious appetite for data of all kinds. This bodes poorly for Canadians hoping for swift passage of a tough new digital privacy law.

What are the specific concerns regarding how Canadian political parties handle the private data of voters?

Bill C-4 (which was before the Parliament of Canada as of March 2026) would give registered political parties wide latitude in how they collect and use the personal information of Canadian voters by exempting them from federal and provincial privacy laws. This information includes highly sensitive data regarding ethnicity, religion, income and political views, as well as social media identifiers. And it may be collected without the knowledge or consent of the individuals involved. Furthermore, political parties are not obligated to show their collected information to the citizens they are monitoring, or to correct any errors. Because Canada’s major federal parties rely heavily on micro-targeting and data-rich voter analytics in their campaigning strategies, they are reluctant to promote stronger privacy laws that would apply to their own activities.

If You Can’t Trust a Politician, Who Can You Trust?

While we await another new privacy bill from Ottawa, the biggest current legislative concern among privacy advocates is Bill C-4. Curiously enough, this bill initially appears to have nothing to do with privacy. Its full title is An Act respecting certain affordability measures for Canadians and another measure. In introducing the bill in the House of Commons, Finance Minister François-Philippe Champagne explained how it legislates an income tax cut as well as a reduction in GST on new homes and the elimination of the consumer carbon tax. “Overall, the measures included in this bill will pave the way for economic growth in Canada,” Champagne boasted.

Something else it will do: legalize the misuse of Canadians’ private data by political parties. That mysterious “another measure” in the bill’s title exempts registered parties from complying with federal or provincial privacy laws. Further, all parties (the Conservatives and NDP support this measure) are exempt from showing any of the information they collect to the people they are monitoring, or from correcting any errors – a clear violation of current legislation. What’s more, these changes are to be made retroactive to 2000, before the now-outdated PIPEDA was introduced.

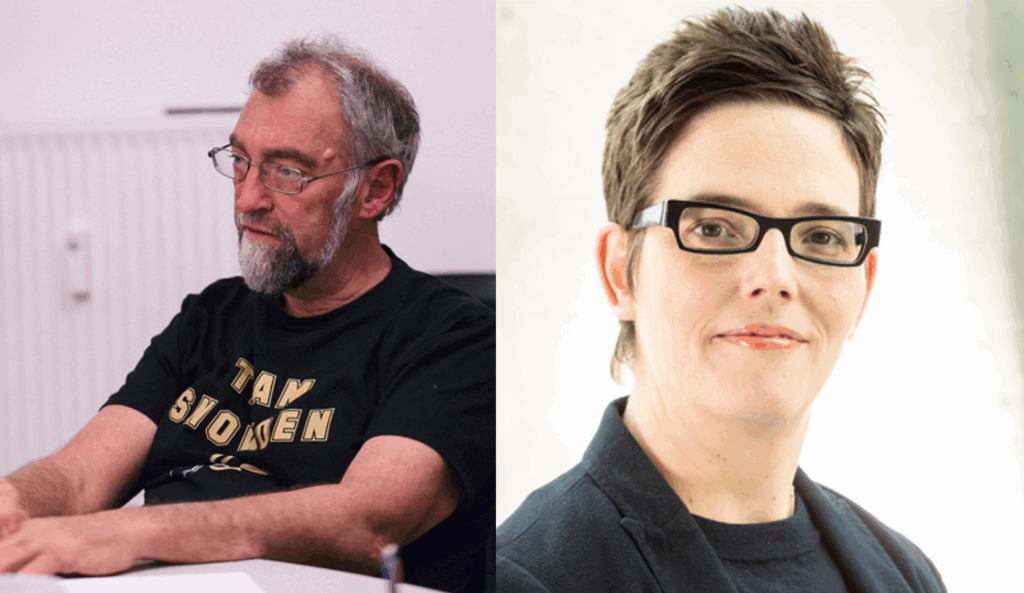

Instead of forcing political parties to abide by federal or provincial privacy legislation, Bill C-4 would permit them to set their own privacy rules. The reason for this strange state of affairs, explains Andrew Clement, a professor emeritus at the University of Toronto and collaborator with the Centre for Digital Rights, is that political parties have an appetite for data that is almost as voracious as AI’s.

“Federal parties rely heavily on voter data analytics and micro-targeting,” says Clement in an interview. Both techniques require plenty of information about individuals and their personal behaviour and preferences. Because the two main parties have become so deeply invested in data-heavy campaign strategies – Clement refers to them as “data-traffickers” – both are reluctant to promote stronger privacy protection that might be applied to themselves. Clement has personally been involved in legal challenges regarding the way political parties use voter data since 2019.

A 2025 analysis of Bill C-4 by Sara Bannerman, Canada Research Chair in Communication Policy and Governance at McMaster University in Hamilton, Ontario, backs Clement’s observations. Bannerman writes that the bill allows “political parties [to] collect highly personal data about Canadians without their knowledge or consent…which can include political views, ethnicity, income, religion or online activities, social media IDs, observations of door-knockers and more.” In separate research published in the Canadian Journal of Political Science, the majority of respondents Bannerman surveyed opposed political parties collecting and retaining any of the information listed above.

Matt Hatfield, executive director of OpenMedia, another Canadian digital privacy lobby group, observes that the Liberals and Conservatives routinely compete with one another in expanding their collection, analysis and use of voter data in a “tit-for-tat” manner. “They keep pushing boundaries, always testing,” Hatfield says in an interview. “One day, someone’s going to cross a line, and do something really bad, but until then they keep seeing how far they can go.” He notes the NDP is also engaged in this sort of data trafficking, but the party’s limited resources have constrained its ability to invade voters’ privacy. As for the risks entailed, in 2025 the federal Conservative Party suffered a major data breach involving the financial records of numerous MPs and candidates.

While the political data privacy file is just one slice of the massive data intrusions regularly perpetrated by corporations and government organizations, it reveals much about the true motivations of the politicians in charge of making the rules. In short, most of them couldn’t care less about your personal privacy.

Regaining Personal Agency, or Sticking it to the Algorithm

Warren and Brandeis summarized their original conception of privacy in 1890 with the remark that, “Some things all men alike are entitled to keep from popular curiosity.” So how can Canadian men and women today revive their long-lost right to be left alone? Both Clement and Hatfield emphasize the importance of individual and collective action in some combination. Citizens often forget the power they hold, the two privacy campaigners suggest. Consumers can demand transparency from platforms they use, choose platforms that prioritize privacy, and speak out against invasive data practices by political campaigns. Awareness itself can be a powerful tool. Understanding how algorithms operate and how data is trafficked can change our behaviour in subtle but meaningful ways.

At a personal level, my own experience with the sting of lost privacy in Grade 8 has led me to adopt a series of intentional habits. I disable the personalized settings on apps whenever possible. I review the privacy settings on all social media platforms I use. I rely on encrypted messaging for sensitive conversations. I avoid granting unnecessary app permissions. And I regularly delete my data history. These actions aren’t revolutionary, but at least they give me some agency over systems designed to strip it away. And it’s not just me. Gen Z writer Radmila Yarovaya wrote recently in The Globe and Mail about my generation’s growing preference for online pseudonyms to protect our identity from corporate snoopers.

How might this attitude change things going forward? Among thoughtful members of Gen Z, there is a sense that the next generation must be raised differently. When we talk about becoming parents at some point in the future, I see a commitment among my peers to insisting on greater digital literacy, transparency and healthy screen time for our children. Having experienced the internet at its most unregulated and unhealthy, we are acutely aware of how bad it can get.

As for our parents, to be fair they were the first generation to witness such a rapid technological transformation within a single lifetime; they had to make it up as they went along. Now we know better. Young people should not be taught to fear the internet. But neither should they be left alone. Rather, they must learn to understand it. Digital literacy – knowing how platforms function, how algorithms shape behaviour, how personal data is mined and harvested – has become a basic skill all parents must impart, just like learning how to cross the street, or saying “please” and “thank you”. The digital world is not optional: the realistic, smart and competent approach is to arm your children with the necessary knowledge.

And while personal choices will always lie at the heart of the privacy debate, as Hatfield explains, “There’s only so much we can do alone. We must also rely on the state to protect our rights.” Some things only governments can do, and Canada clearly needs an updated privacy law. Getting there, however, will require a government prepared to view digital privacy as a fundamental right that applies in all circumstances – including when the folks collecting our data are political parties.

It is time for privacy to be treated as the fundamental democratic right we have always been told it is. To do this, it must be defended by an informed and engaged citizenry supported by accountable and reliable institutions. Neither can do it alone.

Jessica Nwaefidoh is a first-year political science student at Wilfrid Laurier University in Waterloo, Ontario. This is an edited and expanded version of her second-place-winning entry in the 3rd Annual Patricia Trottier and Gwyn Morgan Student Essay Contest.

Source of main image: ChatGPT.