Prime Minister Justin Trudeau seems intent on transforming Canada into a “utopia” of his own imagining. The changes he is contemplating will be dramatic and sweeping, affecting the life of every Canadian for decades to come. They will make virtually everything that isn’t government-subsidized more expensive – transportation, home heating, home construction, food, clothing, consumer goods – and will determine which jobs and industries exist and which don’t. The scope and magnitude of the government-mandated changes contemplated for Canadian society are unprecedented.

Curiously, Trudeau has no explicit mandate from Canadians to make such sweeping changes. He did not campaign in the last election on a $170-per-tonne carbon tax – never mind even higher ones that are likely – nor any of the related measures being mooted for this transformation. And his party garnered neither a majority government nor even a plurality of votes. It is all but certain, however, that the coming election will feature climate change as a central issue.

The rationalization for this transformation centres on science. More specifically, the “settled” science asserting that CO2 emissions generated by human activities, primarily the burning of fossil fuels, are the foremost determinant of atmospheric temperature and, from that, the global climate. From these “scientific facts” follows the political (or moralistic) imperative that if these human-caused or anthropogenic CO2 emissions are not reduced to effectively zero by 2050, a climate apocalypse will be triggered that will render the Earth uninhabitable.

Because the legitimacy of the Trudeaupian project to upend Canadian society rests on the claimed authority of science, it is essential that Canadian voters are equipped to critically assess the validity of the underlying scientific assertions. This does not require a PhD in climatology – or any discipline. After all, neither Trudeau nor his various ministers responsible for climate change had any significant scientific education beyond high school. Yet all claim to understand it; they certainly “believe in it” and seem to have no reservations about lecturing – or hectoring – the rest of us about it.

If you can’t perform repeatable experiments to test the theory, or if the predictions of the theory are such that they cannot be falsified, then it is questionable whether what is being done is actually science, and any claims made regarding this ‘science’ should be treated with skepticism.

What a person does require for a critical assessment of climate change, or any other public policy drawing its authority from science, is an understanding of some fundamental aspects of science: what it can and cannot do, what the scientific method’s strengths and weaknesses are, and the influence of human frailty on the integrity of the scientific process and the findings of research projects.

Things We Need to Know about Science

Following are ten characteristics of science that people need to keep in mind when assessing claims made in the name of science, together with an indication of each item’s relationship to climate change.

1. “A basic tenet of science is that you can do repeatable experiments.”

Repeatable experiments are an undisputed pillar of the scientific method, as affirmed by the above quotation from James Gunn, Professor of Astronomy at Princeton University, as cited in an article in Science. Experiments are conducted to test a specific prediction made by a theory. Repeatability – or replication as it is sometimes called – means that other scientists in other places at other times are able to perform the experiment in exactly the same way and are able to get the same results – to replicate the findings.

Repeatability is essential in order to ensure that the results are valid and not the result of an honest mistake, an intentional misrepresentation or even an outright fraud in the original experiment. According to the famed philosopher of science, Karl Popper, predictions made in a scientific theory should also be falsifiable. This means that a properly designed experiment must offer not only the possibility to confirm the theory, but also to demonstrate it to be false. This is the way science works (more about this later).

And yet, science as practised today is full of non-replicable experiments. Non-replicability is recognized as a “crisis” and, while more common in the social sciences and medicine, is also present in the natural sciences. One recent study found that non-replicability ranged from 38 percent “among Nature/Science publications” all the way to 61 percent in psychology. Perversely, this study also found that “papers that fail to replicate are cited more than those that are replicable.” The crisis is not confined to science itself: the proliferation of bad science further complicates the learning process for interested laypeople.

Controlled (repeatable) experiments to test the anthropogenic global warming (AGW) hypothesis directly are not possible because the system under test, the global climate, is so complex and chaotic that it is impossible to independently control all the relevant variables – assuming these variables are even known. Proponents of the AGW hypothesis rightly note that the radiation absorption properties of the CO2 molecule have been measured using repeatable experiments. These, however, do not test a prediction of the AGW hypothesis. Rather, they test the premise that, if CO2 is the primary determinant of atmospheric temperature, then the CO2 molecule must absorb heat. These experiments show that it does.

Concluding on this basis that CO2 is the primary determinant of atmospheric temperature is, however, a formal logical fallacy (affirming the consequent: showing that a necessary condition holds does not establish the claim that depends on it). To go from these measurements to the latter position requires a lot of extrapolation, inference and speculation that is not supported by repeatable experiments. It would be analogous to confirming Newton’s laws of motion in the lab (which every physics student does at some point) and then concluding it is possible to make anything go faster than the speed of light if you simply apply a force for a sufficient length of time. While the lab experiments are repeatable, the conclusion is wrong because not everything has been taken into account.
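The analogy can be made concrete with a short worked comparison. The sketch below assumes a constant force F applied to a body of mass m starting from rest; the symbols F, m, t and c (the speed of light) are introduced here purely for illustration.

```latex
% Newtonian extrapolation: speed grows without limit
v_{\text{Newton}}(t) = \frac{F}{m}\,t
\quad\Longrightarrow\quad
v_{\text{Newton}}(t) > c \ \text{ once } \ t > \frac{mc}{F}

% Special relativity: momentum p = Ft still grows linearly, but
v_{\text{rel}}(t) = \frac{Ft/m}{\sqrt{1 + \left(\dfrac{Ft}{mc}\right)^{2}}} < c
\quad \text{for all finite } t
```

At everyday laboratory speeds, where Ft is far smaller than mc, the two expressions agree to within measurement error. That is why the lab result is repeatable and yet the extrapolation fails.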

2. Not everything called science is science.

Some disciplines, such as physics, attempt to understand the basic dynamics of the universe and can use repeatable experiments to do so, because the universe behaves consistently in space and time. Other disciplines attempt to reconstruct history. Reconstructed history is not testable by controlled (repeatable) experiments. It relies, instead, on inference and speculation. So while this might look like science and be widely billed as science, it lacks critical characteristics of the scientific method.

As Michael Turner, a theoretical cosmologist at the University of Chicago, explains (quoted from the same Science article as the Gunn quote above), “The goal of physics is to understand the basic dynamics of the universe. Cosmology is a little different. The goal is to reconstruct the history of the universe.” The reason reconstructed history is untestable is that it consists of singular events that happened in the past.

Much of the claimed scientific basis for the AGW premise is of this nature. It relies on reconstructed history developed by inferences from measurements, not of actual temperature, CO2 concentration and time, but of so-called “proxies” for these parameters. Proxies are measurable aspects of various samples that can be collected today from which, using various assumed relationships, it is believed and hoped that accurate historical values can be calculated. One well-known proxy for age, for example, is counting tree rings.

These inferred values cannot, however, be tested by independent, repeatable experiments. All of the inferences are strongly dependent on the assumptions used for transforming the actual proxy measurements into temperature, CO2 levels and dates. For example, it is generally assumed that trees will produce one growth ring per year. It has been shown, however, by extracting samples from growing trees over an extended period of time, that under certain growing conditions trees can form more than one ring per year. Assuming a one-ring-to-one-year correspondence does not necessarily provide the correct answer. Indeed, the study in question concluded that, “It is abundantly clear, therefore, that growth rings cannot be used for precise dating of historical events if the trees grew in or near the forest border.”

The resulting inferred values are therefore uncertain, unreliable and arguably unscientific. Consequently, any AGW analysis should be limited to using data exclusively from the observational record gathered by scientific instruments. AGW assertions that are contingent upon data that precede the instrumented record should not be afforded much credence.

3. Science cannot prove a theory to be true, only false.

This may strike many people as surprising, even bizarre, but it’s true: the scientific method cannot conclusively prove any theory to be true, only that it is false. As Charles Bennett, Professor of Physics and Astronomy at Johns Hopkins University, wrote in a letter to the editors of the prestigious journal Science in 2011, “I find myself frequently repeating to students and the public that science doesn’t ‘prove’ theories. Scientific measurements can only disprove theories or be consistent with them. Any theory that is consistent with measurements could be disproved by a future measurement. I wouldn’t have expected Science magazine, of all places, to say a theory was ‘proved.’”

Bennett was writing to scold Science for having published an article with a headline that included the words “satellite proves Einstein right.” The editors not only published Bennett’s letter but affirmed his point, writing that, “Bennett is completely correct. It’s an important conceptual point, and we blew it.”

The inability of science to prove a theory correct is not as disappointing as it sounds, for science has been built around that fact for several hundred years. The normal scientific process tries to prove a new theory wrong. If it can’t do so, over many repeated experiments by many different scientific teams, and after much argument and discussion, then gradually a theory is accepted as true. But it is never seen as necessarily true, and it is always subject to reassessment and revision.

The best that science can do is provide evidence that is consistent with a theory. But this does not prove the theory to be true, since the data could also be consistent with some other theory, even if that theory has not yet been articulated. Evidence that is inconsistent with a theory, on the other hand, does indicate that the theory is wrong; if such evidence is itself credible – and repeatable – it can disprove a theory, conclusively and permanently. As consistent evidence accumulates with no inconsistent evidence being uncovered, one can have increasing confidence that a theory is correct, i.e., true. Still, one can never be certain.

A critical aspect of all of this is that the data are more important than the hypothesis – or the hypothesizer. If the data are inconsistent with a hypothesis, they provide clear evidence that the hypothesis is false. The classic example is that of the black swan. Based on empirical evidence, it was for centuries hypothesized – actually considered to be established fact or “settled science” – that all swans were white. Eventually, however, a black swan was observed. This single observation falsified the hypothesis and unsettled the science.

Many scientific observations have been made that are inconsistent with the AGW hypothesis. Consequently, it is wrong for anyone to claim that science has “proved” the AGW hypothesis true – even though that has been claimed innumerable times by politicians, activists, journalists and scientists themselves. This is because, first, science cannot ever prove a hypothesis to be true, and second, the existence of evidence inconsistent with a hypothesis indicates that it is false.

4. Truth is not decided by majority opinion – or “dogma and the intellectual chorus.”

Truth – scientific or otherwise – is not established by the number of people who believe something, even if they are said to be “a majority,” “large majority,” “virtually all” or “a consensus.” Nor is truth determined by the amount of money they spend, the positions of authority they hold, the intensity of their opinion, the number of documents they issue or the amount of energy they apply to denouncing their critics.

The vast majority of biologists once believed that “ontogeny recapitulates phylogeny,” that is to say, that the human embryo goes through (or recapitulates) various evolutionary stages, such as having gills like a fish, a tail like a monkey, etc., during the first few months that it develops in the womb. This was, however, based on a fraud perpetrated by Ernst Haeckel around 1874, who concocted or altered drawings of various embryos to provide apparent evidence of similarity. Although this fraud was suspected early on, the assertion continued to be made for over 120 years, until the fraud was unequivocally exposed in 1997 with the publication of embryonic photos by embryologist Michael Richardson. As Science reported at the time, “Using modern techniques, a British researcher has photographed embryos like those pictured in the famous, century-old drawings by Ernst Haeckel – proving that Haeckel’s images were falsified.”

Similarly, the vast majority of geneticists once believed that 98 percent of our DNA is junk with no function, simply because it doesn’t code for proteins. This is now acknowledged as being wrong. As reported on the Discover website, the Encyclopedia Of DNA Elements (ENCODE) has established that “80 percent of the genome has a ‘biochemical function,’” that “Almost every nucleotide is associated with a function of some sort or another,” and that “It’s likely that 80 percent will go to 100 percent.”

Another famous and important example is how essentially all medical professionals once believed that ulcers were caused by excess gastric acid in the stomach, often induced by stress. The correct cause was finally established when Dr. Barry Marshall induced the condition in himself by drinking a bacteria-laden solution, and then cured himself with antibiotics. Prior to this, as Kidd and Modlin put it, “Dogma and the intellectual chorus were in harmony advocating that gastric acid was critical in ulcer disease.” That phrase – “dogma and the intellectual chorus” – sounds eerily familiar today.

These examples illustrate that what is considered, by consensus, to be “settled science” at one time can be repudiated by science. In some cases, this can happen quickly; in others, the false theory maintains its grip on the scientific community and the popular imagination for a very long time. The key issue is that one cannot know for certain at any particular time which bits of currently “settled science” will be repudiated next week, month or year.

Consequently, we should not allow ourselves to be bamboozled by statements such as “Ninety-seven percent of scientists agree: climate change is real, man-made and dangerous,” even if they are made by a popular U.S. President as this one was. The truth of the hypothesis is not determined by the number or position of the people who believe it to be true – be they scientists, movie stars or presidents. (In any event, as explained by Ian Plimer, Professor Emeritus of Earth Sciences at the University of Melbourne, writing in The Australian, the 97 percent figure was generated by poor methodology in a study undertaken to achieve a non-scientific objective – swaying public opinion.)

From a scientific perspective, the truth or otherwise is determined by the data. In particular, whether:

- The data are the direct output of repeatable experiments that directly test the hypothesis;

- The experiments test the hypothesis in a manner that allows the hypothesis to be falsifiable; and

- Any of the data are inconsistent with the hypothesis.

Moreover, we should not allow, under any circumstances, claims that something is “settled science” to inhibit comprehensive and robust debate. Doing so is inimical to the whole philosophy of science. History has shown that it is only by means of unconstrained debate that “settled” but erroneous science can be corrected. The heliocentric-vs.-geocentric structure of the solar system is the archetypical example of this (every schoolchild learns of the trials of Galileo), but “phlogiston” being the source of fire and the atom being indivisible are two other examples of “settled science” that were demonstrated to be wrong after vigorous debate.

And if a theory really is rock-solid and supported by the vast amount of unimpeachable evidence that is claimed, then its proponents should see no harm in other scientists continuing to question the theory through additional observations and experiments. Their failures to disprove the theory, after all, would only further strengthen confidence in the theory.

5. Correlation does not mean causation.

From July to December 2008, both the S&P/TSX Composite Index and the temperature in Calgary dropped dramatically. There was a strong correlation in their behaviour. Neither one caused the other, however, nor were both caused by some third variable. The former was due to the global financial crisis precipitated by the sub-prime mortgage debacle in the U.S., and the latter was due to the normal changing of the seasons.
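A minimal sketch of this point follows. It computes the Pearson correlation coefficient for two series that both happen to decline over the same six months. The numbers are invented for illustration and are not actual index or temperature readings; the names `pearson_r`, `stock_index` and `calgary_temp_c` are likewise hypothetical.

```python
from math import sqrt

def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

# Hypothetical month-end values, July to December (NOT real data).
stock_index = [13500, 13700, 11700, 9700, 9200, 8900]
calgary_temp_c = [16.0, 15.5, 11.0, 5.0, -2.0, -7.0]

r = pearson_r(stock_index, calgary_temp_c)
print(f"r = {r:.2f}")  # a value close to 1 indicates strong correlation
```

A coefficient near 1 says only that the two series moved together. Nothing in the calculation knows or cares why each one fell, so the number by itself cannot distinguish a causal link from coincidence.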

6. Data do not speak for themselves.

As discussed earlier, the empirical data observed in testing a hypothesis are the critical element in assessing its potential validity. Data, however, must still be interpreted within some sort of framework. They do not “speak for themselves.” Such a framework will be heavily influenced by one or more presuppositions – things that are just assumed to be true.

A non-science example of presuppositions at work can be found in a courtroom criminal trial. There is one set of data – the evidence – but two interpretations based on two different presuppositions. One is that the defendant is guilty; the other that the defendant is innocent.

Presuppositions exist in the scientific arena and often the scientist is oblivious to these, leading the researcher to unconsciously analyze the data in a particular way determined by his/her presuppositions. Perhaps the most pervasive unconscious bias is discussed in The Trouble With Scientists, in which Brian Nosek of the University of Virginia talks about what he calls “motivated reasoning.” In this process, a scientist interprets observations to fit a particular idea motivated, in turn, by personal considerations.

Nosek says one of the strongest distorting influences is the scientific world’s reward system that confers kudos, tenure and funding. As he puts it, “To advance my career I need to get published as frequently as possible in the highest-profile publications as possible. That means I must produce articles that are more likely to get published.” These, he says, are ones that report positive results – “I have discovered,” not “I have disproved” – original results – never “We confirm previous findings that” – and clean results – “We show that” not “It is not clear how to interpret these results.”

The problem, as Nosek explains in the book, is that “most of what happens in the lab doesn’t look like that.” Instead, it’s mush. So, he asks, “How do I get from mush to beautiful results?” The scientist is faced with a dilemma: “I could be patient, or get lucky – or I could take the easiest way, making often unconscious decisions about which data I select and how I analyze them, so that a clean story emerges. But in that case, I am sure to be biased in my reasoning.”

Many people are quick to assign such biases to researchers who dispute the AGW hypothesis, often referring to them derogatorily as “deniers.” Use of this term to silence dissent is an egregious, odious and downright malevolent “weaponization” of language. Every scientific advance has been the result of someone denying the contemporaneous “settled science,” “scientific consensus” or status quo. Galileo is probably the archetypical example, but even Einstein “denied” the veracity of well-established Newtonian physics.

The Intergovernmental Panel on Climate Change (IPCC) has its own presuppositional bias in favour of the AGW hypothesis. The IPCC website states that it “is the United Nations body for assessing the science related to climate change.” While this sounds innocuous, the IPCC operates under the United Nations Framework Convention on Climate Change, Article 1.2 of which states that, “‘climate change’ means a change of climate which is attributed directly or indirectly to human activity,” and Article 2 of which states that, “The ultimate objective of this Convention…is to achieve…stabilization of greenhouse gas concentrations in the atmosphere at a level that would prevent dangerous anthropogenic interference with the climate system.” Clearly, the IPCC decided ahead of time that climate change is human-induced, that the cause is anthropogenic greenhouse gas emissions – principally from the burning of fossil fuels – and that the correct policy response is to reduce these emissions.

7. Disciplines are frequently captured by a ruling paradigm.

The term “paradigm,” according to the website of Simon Fraser University, “means a set of overarching and interconnected assumptions about the nature of reality…The paradigm itself cannot be tested.” Any discipline requires certain assumptions to function; the important question is whether its practitioners are open to reviewing and revising their assumptions in the face of powerful new information. In his famous 1962 book The Structure of Scientific Revolutions, the philosopher of science Thomas Kuhn coined the term “paradigm shift” to, as the Simon Fraser page puts it, “describe the process and result of a change in basic assumptions within the ruling theory of science.”

Unfortunately, many disciplines and organizations become resistant to evaluating their basic assumptions. As Kuhn put it, a “ruling paradigm” can take hold, forming an unquestioned framework for interpreting data within a particular discipline. Data inconsistent with the framework are typically disregarded. Sometimes they are treated as errors by the researcher. Sometimes they are dismissed because the researcher is judged not to have appropriate credentials. Sometimes they are dismissed because the researcher is suspected of unacceptable political leanings or has what are considered objectionable funding sources. If none of the preceding can be used, the data are grudgingly accommodated within the ruling paradigm by ad hoc ancillary hypotheses designed to preserve the ruling paradigm.

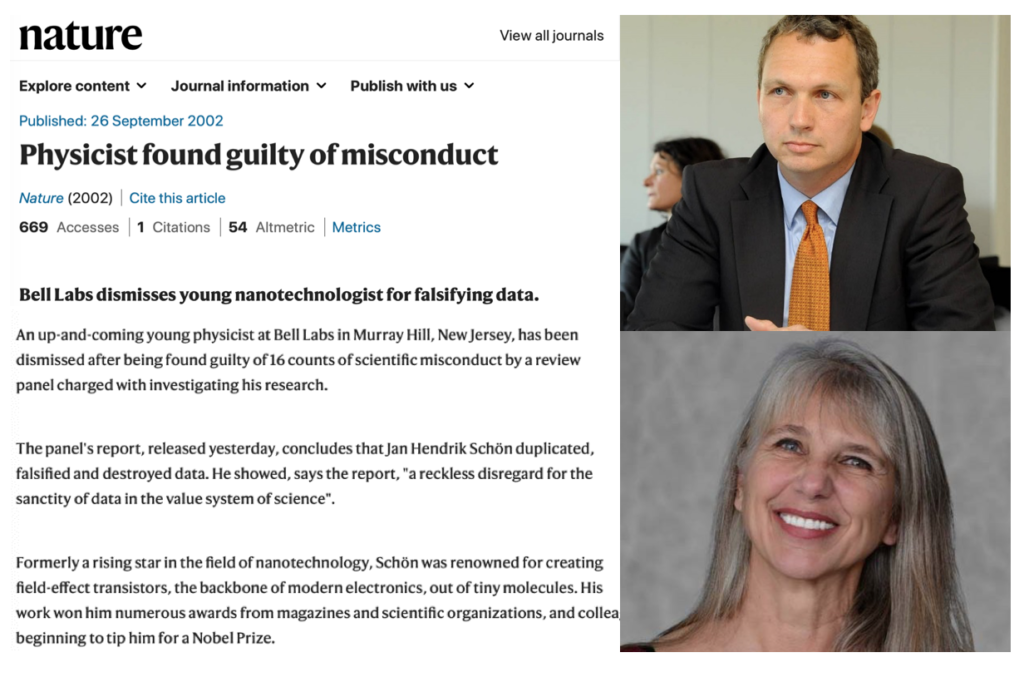

Jan Hendrik Schoen, a German physicist working at Bell Labs in New Jersey, ‘Published a slew of supposedly groundbreaking – but actually fraudulent – papers [in peer-reviewed journals]…including an eye-popping total of 16 published in two of the most prestigious journals, Science and Nature.’

In none of these instances are the data allowed to provide their real value: as indicators of deficiency in the prevailing theory.

Climate science has evidently been captured by the AGW paradigm – indeed, it is assumed by the IPCC – and challenges are deterred or crushed using the rationalizations listed above. Current climate science is rife with ancillary hypotheses seeking to explain and incorporate climate phenomena that depart from the main theory – such as cooler-than-modelled temperatures – when these observed results should be viewed as evidence undermining the main theory.

8. Publication in peer-reviewed journals does not guarantee veracity.

The authority of peer review is often treated with almost cult-like reverence, routinely being used as proof in itself and as a tool to forestall debate on a scientific topic. In an ideal world, of course, peer review serves as a reliable method of due diligence and, therefore, signals that a paper’s findings can be considered credible. This is important for interested lay people (including policy makers), who can’t be expected to understand and interpret raw scientific data on their own.

Unfortunately, we no longer live in such a world. Numerous bogus papers have been published in peer-reviewed journals. (See, for example, reports in Nature and The Atlantic.) Some of these were produced simply to test the process, demonstrating that it failed – which in itself should reduce the public’s reverence for peer review as currently practised.

But others were done with the hope of being accepted as legitimate and with the intention to deceive. In a particularly egregious case, as reported by Yahoo!Finance, Jan Hendrik Schoen, a German physicist working at Bell Labs in New Jersey from 1997 to 2002, “Published a slew of supposedly groundbreaking – but actually fraudulent – papers [in peer-reviewed journals]…including an eye-popping total of 16 published in two of the most prestigious journals, Science and Nature.”

Schoen appears to have bamboozled his colleagues and superiors simply by making exaggerated and inaccurate claims, using artificially concocted data, which confirmed certain latent theoretical predictions in an area of immense practical application, thereby creating enormous hype. He was finally exposed in 2002 when, because of the growing instances of identical data and graphs having been used in different papers about different topics, Bell Labs established a committee to investigate and he was subsequently sacked. In June 2004 the University of Konstanz in Germany revoked Schoen’s doctorate (he appealed but ultimately lost).

Peer review can also serve to enforce the orthodoxy of a ruling paradigm, either implicitly or explicitly. Wanting to ensure that their papers get accepted for publication, authors may well analyze their data only in terms of the ruling paradigm and find a way to make the data consistent with it, even when alternative explanations might fit the data better.

On the other side, reviewers might refuse to accept inconsistent data or explanations. As palaeontologist Mary Schweitzer, the discoverer of soft biological tissue in dinosaur fossils, recounted in Discover Magazine in 2006, “‘I had one reviewer tell me that he didn’t care what the data said, he knew that what I was finding wasn’t possible,’ says Schweitzer. ‘I wrote back and said, “Well, what data would convince you?” And he said, “None.”’” [Editor’s note: This quotation appeared in a photo caption in the print edition of Discover Magazine; it does not appear in the article’s online version.]

Many people attempt to suppress those who dispute the AGW hypothesis by questioning their credentials due, among other reasons, to their very lack of publication in “appropriate” peer-reviewed journals. But if the peer-review process is itself manipulated or the journals in question are biased, then such criticism becomes a self-fulfilling prophecy. The “Climategate” scandal of 2009-2010, for example, clearly revealed senior and influential members of the science community manipulating the peer review and publication process to suppress dissenting opinions.

In summary, we should not allow claims of “peer review” to intimidate us into unquestioning acceptance of any claim. The only things that should matter – inside and outside the scientific community alike – are the quality of the data (output from repeatable experiments) and the rigour of the analysis.

9. The image of the scientist as an unbiased, objective seeker-of-the-truth is a myth.

Scientists are just people and, just like other people, many are attracted to fame and fortune. Many more still depend utterly on grant money to survive, and the grant system favours certain viewpoints. As noted above regarding Schoen, some scientists are not above bending the truth or even committing outright fraud in order to achieve their goals.

10. Model outputs are not evidence.

An example of the former is the Bohr model of the atom that pictures the atom as an incredibly small analogue to the solar system, with the nucleus as the “sun” and the electrons as the “planets.” The Bohr model served as a very useful tool for understanding many aspects of atomic physics. Examples of the latter are the models used in climate science that attempt to predict the atmospheric temperature 10, 20, 50 or 100 years in the future. Neither type of model, however, actually provides any new empirical evidence about the true nature of reality.

In particular, outputs from predictive models should never be confused with actual, empirical measurements. These outputs do not constitute evidence resulting from repeatable experiments; multiple runs of a model with different values for the input parameters are not the same as repeatable experiments. Models are attempts to depict reality, but whatever evidence they might contain (such as, for example, measured temperatures from the past) was separately gathered and was used in constructing or running the model (along with various assumptions, working premises, etc.). The outputs of models are not measurements, they are calculations.

Predictive models for complex reality are usually computer-based. Such models need to be verified and validated before they can be considered for investigative use. This is a complex technical process, with a number of key steps, that culminates in validating the model by confirming that it can accurately reproduce existing empirical data – such as past temperature.

The fidelity of any predictive model – that is, the degree to which it is likely to correspond to reality, at least in a functional sense – can be assessed by the fidelity with which it can reproduce existing empirical measurements of the model’s output parameter and/or the accuracy with which its predictions agree with future measurements when these are made. To the degree that the science is truly “settled,” there should only be a single model for the phenomenon and that model should be able to accurately reproduce existing, or past, measurements of the output variable and provide predictions for any future time that are confirmed by measurements when that time comes.
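One common way to put a number on this kind of fidelity is the root-mean-square error (RMSE) between model output and measurements; it is one possible metric among several and is not named in the discussion above. The sketch below is illustrative only: `toy_model`, its parameters and the “observed” values are all hypothetical and are not drawn from any real climate model or temperature record.

```python
from math import sqrt

def rmse(predicted, observed):
    """Root-mean-square error between model output and measurements."""
    if len(predicted) != len(observed):
        raise ValueError("series must have the same length")
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return sqrt(sum(squared) / len(squared))

def toy_model(year, baseline=14.0, trend_per_year=0.02, start_year=2000):
    """Hypothetical linear-trend model; every parameter is an assumption."""
    return baseline + trend_per_year * (year - start_year)

# Made-up 'measurements' for illustration only (NOT real temperature data).
years = [2000, 2005, 2010, 2015, 2020]
observed = [14.0, 14.05, 14.25, 14.3, 14.35]

# Hindcast: run the model over years that already have measurements.
hindcast = [toy_model(y) for y in years]
error = rmse(hindcast, observed)
print(f"hindcast RMSE = {error:.3f}")
```

The same function can be applied later to compare a model’s forecasts against measurements once they have been made. A small hindcast error with a large forecast error would suggest the model was tuned to the past rather than capturing the underlying behaviour.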

In the case of climate change, there are many models, which in itself suggests that the science is not “settled.” Remarkably, none of these models provides a particularly high-fidelity fit to past temperature data. All of them have an abysmal predictive record, whether of events such as the disappearance of the Arctic sea ice or the submergence of low-elevation islands, or of atmospheric temperature values, particularly in the period 1990 to the present.

Accordingly, it is reasonable to be skeptical of those who claim to know what the atmospheric temperature will be in 100 years, or even 20 years, based on the output of models. Model output is not evidence of climate change. The disagreement between model output and empirical measurements is, however, evidence for the need to revise the model.

Conclusion

Despite this essay’s focus on AGW theory to illustrate the ten points about science, it is not intended to tell people what to think about climate change or any particular subject. There is far too much of that already coming from politicians, government officials, public intellectuals and activists, the news media and academe. Rather, it is intended to help improve people’s understanding of the scientific method and their ability to think critically for themselves when reading or watching programs about science topics or hearing of new scientific breakthroughs, when evaluating proposed new public policies that are said to be science-based, and when assessing claims that draw upon the authority of science.

One of these public policy areas – but by no means the only one – is the Liberal government’s plan for a major “reset” of Canadian society in pursuit of the utopian goal of “saving the planet” on the basis of a hypothesis that fails several of the key tests of the scientific method. To justify his proposed drastic restructuring of Canadian society, Trudeau needs to provide far more rationale than incantations such as “According to the IPCC” or “The UN says” or “Scientists agree that climate change is real.” In addition, he needs to provide a full, detailed accounting of the impact that his project will have on every Canadian. In assessing the prime minister’s claims, Canadians should do so with a full understanding of these ten fundamental aspects of science.

Jim Mason earned a BSc in engineering physics and a PhD in experimental nuclear physics, had a lengthy career with one of Canada’s leading defence electronics companies (for part of which he was Vice-President of Engineering) and is currently retired and living near Lakefield, Ontario.